As the world adapts to an era of AI transformation, many privacy teams find themselves assigned new AI governance responsibilities. While the change can be daunting, it also brings opportunity. Cisco reports that 90% of companies have expanded their privacy programs to support AI governance initiatives.

For the purposes of this guide, we’ll cover AI governance as it relates to your organization purchasing and adopting AI tools from third parties. We’ll cover AI governance for developing products your organization sells in a later article.

What is AI Governance?

AI Governance is a term that can be used broadly and with ambiguity. By definition, AI governance is the application of rules, processes, and oversight to ensure the use of AI is both ethical and compliant. Many organizations are still figuring out who owns AI governance, but the privacy profession’s accumulated technical skills and legal compliance requirements make a strong argument to align AI with data privacy work. Whether you’ve already been assigned AI as a core responsibility or you’re making the case to expand your resources in order to unlock further AI innovation at your organization, this guide will help you build a plan for the work ahead.

Like data privacy governance, AI governance comes with an early framework from the EU inclusive of guidelines for human rights protections, risk assessment requirements, and an enforcement plan. But the EU AI Act isn’t GDPR. After GDPR, a handful of U.S. states went to work developing privacy laws of their own, the first to pass being CCPA. Meanwhile, in the same time frame since the EU AI Act was passed, nearly every state has introduced some form of AI-related legislation, for a total of over 700 bills. The scale is unprecedented. The direction is even less clear. You’re going to need a roadmap.

Regulatory Requirements

There are two primary regulations to understand when developing your AI governance strategy: The EU AI Act and the Colorado AI Act (CAIA).

EU AI Act vs. Colorado AI Act comparison guide

| | EU AI Act | CAIA |
| --- | --- | --- |
| Applicable systems & processes | Broad definition of AI (a machine-based system with varying autonomy that generates outputs such as predictions, content, decisions, or recommendations) | Similar definition of AI but focused exclusively on systems that make consequential decisions and could contribute to discrimination |
| Responsible party | Both the developers and deployers of AI systems | Both the developers and deployers of AI systems |
| Core obligations | High risk systems require documented data governance, reporting, and public transparency. Lower risk systems may have other obligations such as transparency disclosures, technical documentation, and copyright safeguards. | Documented risk assessments, impact records, and consumer notices designed to provide transparency to consumers and address algorithmic discrimination. |
| Enforcement | Penalties can include fines up to 7% global turnover, enforced by EU AI Office and national authorities | Penalties can include financial remedies as enforced by the Colorado Attorney General. |
| Consumer rights | No new rights beyond fundamental rights including privacy, non-discrimination, human dignity, and autonomy. | Consumers have the right to transparency and to request a human review |

While more limited in scope, other AI regulations to understand include:

- New York City Local Law 144: Concerns use of AI in employment decisions with significant enforcement penalties attached.

- China’s Generative AI Measures: Issues obligations on registration, security review, and content controls for AI systems.

- Many U.S. state privacy laws, such as the Minnesota Consumer Data Privacy Act (MCDPA) and the California Consumer Privacy Act (CCPA), also impose responsibilities related to automated decision making.

AI law compliance checklist

In summary, organizations aiming for compliance with AI regulations should at a minimum:

- Perform thorough assessments before implementing new AI technologies to ensure any risks are understood and mitigated

- Ensure AI usage is transparent internally and externally

- If AI will make any meaningful decisions, provide a human alternative to automated decision making upon request, or maintain a human review step in all decision making
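The checklist above can be expressed as a simple gap check. The following is a minimal sketch, assuming a hypothetical `AiToolRecord` with illustrative field names; it is not a legal test and does not reflect the wording of any regulation.

```python
from dataclasses import dataclass


@dataclass
class AiToolRecord:
    """Hypothetical governance record for one AI tool; field names are illustrative."""
    name: str
    risk_assessment_done: bool
    disclosed_internally: bool
    disclosed_externally: bool
    makes_meaningful_decisions: bool
    human_review_available: bool


def checklist_gaps(tool):
    """Return the checklist items this tool does not yet satisfy."""
    gaps = []
    if not tool.risk_assessment_done:
        gaps.append("risk assessment missing")
    if not (tool.disclosed_internally and tool.disclosed_externally):
        gaps.append("AI usage not fully disclosed")
    # Human review only matters when the tool makes meaningful decisions.
    if tool.makes_meaningful_decisions and not tool.human_review_available:
        gaps.append("no human review or alternative")
    return gaps


# Example record (made-up values): disclosed internally only, no human review.
chatbot = AiToolRecord("support-chatbot", True, True, False, True, False)
print(checklist_gaps(chatbot))
```

A tool that returns an empty list has met the minimum items in this sketch; your own program will likely track more.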

Types of AI Risk

When businesses consider how an AI governance framework can address risks beyond AI-specific regulations, they are generally focused on:

- Restricted use cases: Automated decision making, profiling, social scoring, and certain other AI use cases face additional regulatory requirements or may be altogether prohibited, and once an AI system is implemented, it can be difficult to control how it is used.

- Model training: Without proper safeguards, an AI system that uses your organization’s data to train its model could leak personal information to other entities without consent.

- Unauthorized access: Complex AI flows can obscure data flows and make it more difficult to ensure data access is permissioned and processed only as detailed in the privacy policy. AI can also introduce a new entry point for outside attackers.

- Data minimization: Although AI doesn’t inherently create a data minimization issue, most AI systems rely on large data sets, and some AI systems can use that data to infer sensitive information. Data also cannot be deleted from an AI model as easily as from a database, making data minimization all the more difficult.

- Model output: AI models can hallucinate data and citations, facilitating the spread of misinformation. Teams are also concerned with AI being applied for unethical outcomes.

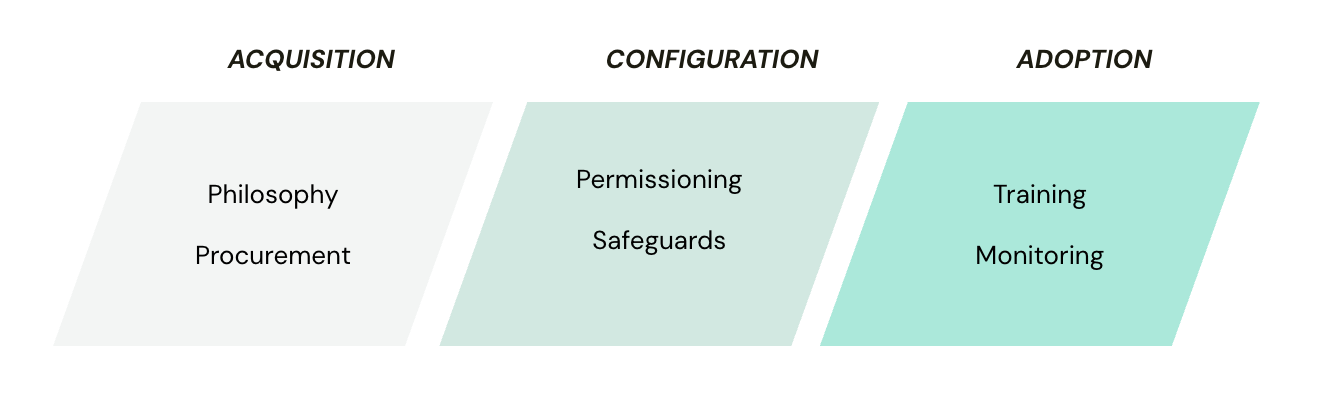

These risks can arise—and be mitigated—anywhere from procurement of an AI tool to general usage down the line. Businesses with mature AI governance functions will map these risks to AI acquisition, configuration, and ongoing use stages to identify where controls and oversight matter most.
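One way to picture this mapping is as a small lookup table. The sketch below uses the five risk types and the three stages named in this guide; which risk is assigned to which stage is an illustrative assumption, so adjust it to your own assessment.

```python
from collections import defaultdict

# Risk types and lifecycle stages come from this guide. The assignments
# themselves are assumptions made for illustration only.
RISK_STAGE_MAP = {
    "restricted_use_cases": ["acquisition", "adoption"],
    "model_training": ["acquisition", "configuration"],
    "unauthorized_access": ["configuration", "adoption"],
    "data_minimization": ["acquisition", "configuration", "adoption"],
    "model_output": ["adoption"],
}


def risks_by_stage(risk_stage_map):
    """Invert the map so each stage lists the risks to review at that point."""
    by_stage = defaultdict(list)
    for risk, stages in risk_stage_map.items():
        for stage in stages:
            by_stage[stage].append(risk)
    return {stage: sorted(risks) for stage, risks in by_stage.items()}


stage_view = risks_by_stage(RISK_STAGE_MAP)
for stage, risks in stage_view.items():
    print(stage, "->", ", ".join(risks))
```

Viewing the risks per stage makes it easier to see where a single control, such as a procurement assessment, covers several risks at once.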

Visualizing an AI risk journey

When designing a roadmap for AI governance work, you’re going to want to consider every stage where AI could introduce risk into your organization. While this won’t necessarily reflect the order you perform the work in, it can help you understand the scope of work required.

Acquisition

The first opportunity for AI risk comes from the AI partners and tools you select. Guided by your organization’s philosophy towards AI and demonstrated in your procurement process, you will introduce or mitigate AI risk before anyone even interacts with an AI model.

Example risk management strategies:

- Define your responsible AI principles and align on a company policy (take inspiration from the team at CB Insights), ensuring all relevant teams are reflected in the process

- Train managers and procurement teams on how to recognize AI risk during procurement (but remember that best practices are still evolving)

- Embed AI risk assessments during the procurement process (use AI for help building your assessment if needed)

- Create your own AI addendum to help negotiate clearer safety standards with vendors

Configuration

Even if you accept some risk during the acquisition phase, you can further control for that risk with a strong mitigation strategy as AI features are initially configured.

Example risk management strategies:

- Require new tools to be tested with synthetic or anonymized datasets before they are made formally available

- Validate that tools exhibit their stated privacy and security controls prior to launch, e.g. confirming that training settings are disabled, data retention policies are applied, etc.

- Provision access to AI tools at the team level to control for exposure to sensitive data

- Thoroughly document the tool’s use, safeguards, and purposes for your internal audience, and if relevant, for customers too

Adoption

As privacy managers already know, governance is not a “set it and forget it” exercise. AI tools need continuous oversight to ensure risk remains in check.

Example risk management strategies:

- Use automated scans to identify new AI capabilities

- Centralize documentation of AI capabilities so they can be effectively triaged

- Reintroduce risk assessments any time AI capabilities change or AI gains access to more sensitive data
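The last strategy above, re-running an assessment when capabilities change or data access widens, can be sketched as a comparison between two snapshots of a system. The `AiSystemSnapshot` type and the `SENSITIVITY_RANK` ordering are hypothetical; substitute your own inventory format and data classification.

```python
from dataclasses import dataclass

# Hypothetical sensitivity ordering; replace with your own data classification.
SENSITIVITY_RANK = {"public": 0, "internal": 1, "personal": 2, "sensitive_personal": 3}


@dataclass(frozen=True)
class AiSystemSnapshot:
    """Illustrative point-in-time record of an AI-enabled system."""
    name: str
    capabilities: frozenset
    max_data_sensitivity: str


def reassessment_reasons(previous, current):
    """Return the reasons a new risk assessment is warranted, if any."""
    reasons = []
    # Symmetric difference: capabilities that were added or removed.
    changed = previous.capabilities ^ current.capabilities
    if changed:
        reasons.append("capabilities changed: " + ", ".join(sorted(changed)))
    if SENSITIVITY_RANK[current.max_data_sensitivity] > SENSITIVITY_RANK[previous.max_data_sensitivity]:
        reasons.append("access to more sensitive data: " + current.max_data_sensitivity)
    return reasons


before = AiSystemSnapshot("helpdesk", frozenset({"reply drafting"}), "internal")
after = AiSystemSnapshot("helpdesk", frozenset({"reply drafting", "sentiment scoring"}), "personal")
print(reassessment_reasons(before, after))
```

An empty list means nothing in this sketch triggered a reassessment; a scheduled periodic review may still apply.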

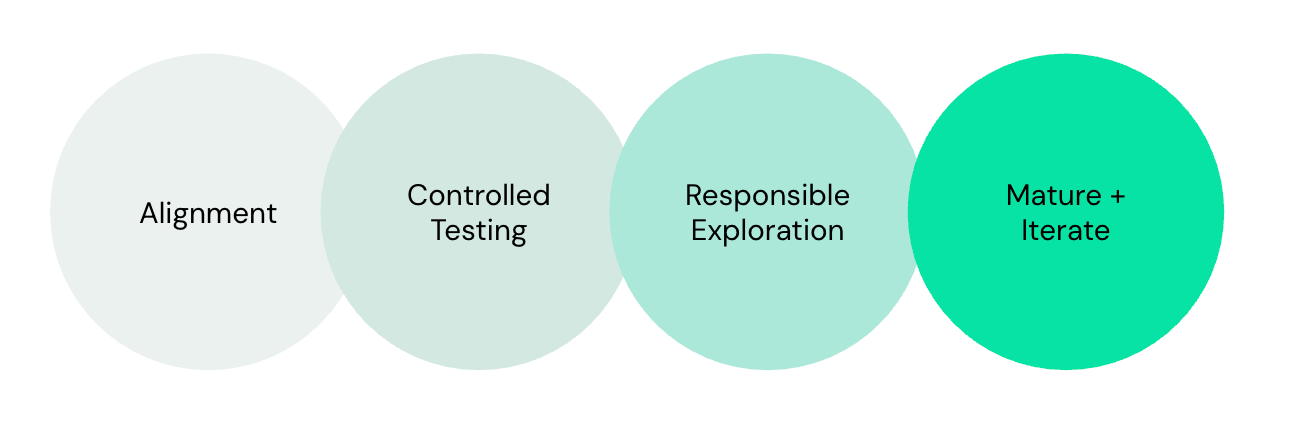

Setting priorities & enacting the roadmap

Now that you have a basic understanding of where AI could present risk, it’s time to create a plan and take action on AI governance. Here’s our recommendation:

Phase 1: Alignment

Day 0 – 90

Before you can do anything, you need to ensure your company is aligned on why AI governance is important and what you will accomplish. Ask:

- Why do we need AI governance?

- In the context of our industry and our existing data governance, how much risk can we tolerate in the interest of AI transformation?

- Who is ultimately accountable for AI governance?

Ensure every member of your leadership team is aligned on the responses and confirm that alignment through written AI use principles and policies.

This is also the stage where organizations develop a cross-functional AI Governance Council or similar group to oversee AI operations at the organization. Whether the responsibility is assigned to one team or a committee, identifying a clear executive sponsor is critical.

Recommended Reading During Phase 1:

- Responsible AI Use Principles & Policy Guide and Worksheets

- GrailCast Live Ep. 2 Rewind: The Responsible AI Governance Blueprint ft. Lauren Nardoni

- Who Owns AI Governance?

- Ten Steps Ahead of Privacy Regulation: GoFundMe

Phase 2: Controlled Testing

Day 30 – 120

Start by proactively identifying one or more AI vendors you feel highly confident align with your new AI principles and policy. Use these systems as a learning opportunity to help you understand what mitigation measures are most important for your organization and who is needed to support them.

If you’re not sure where to start, try starting with your own privacy team! Used well, AI can help scale the work of privacy teams without introducing new risk to your business. Trying out AI for yourself is a great way to deepen your understanding of both its opportunities and limitations.

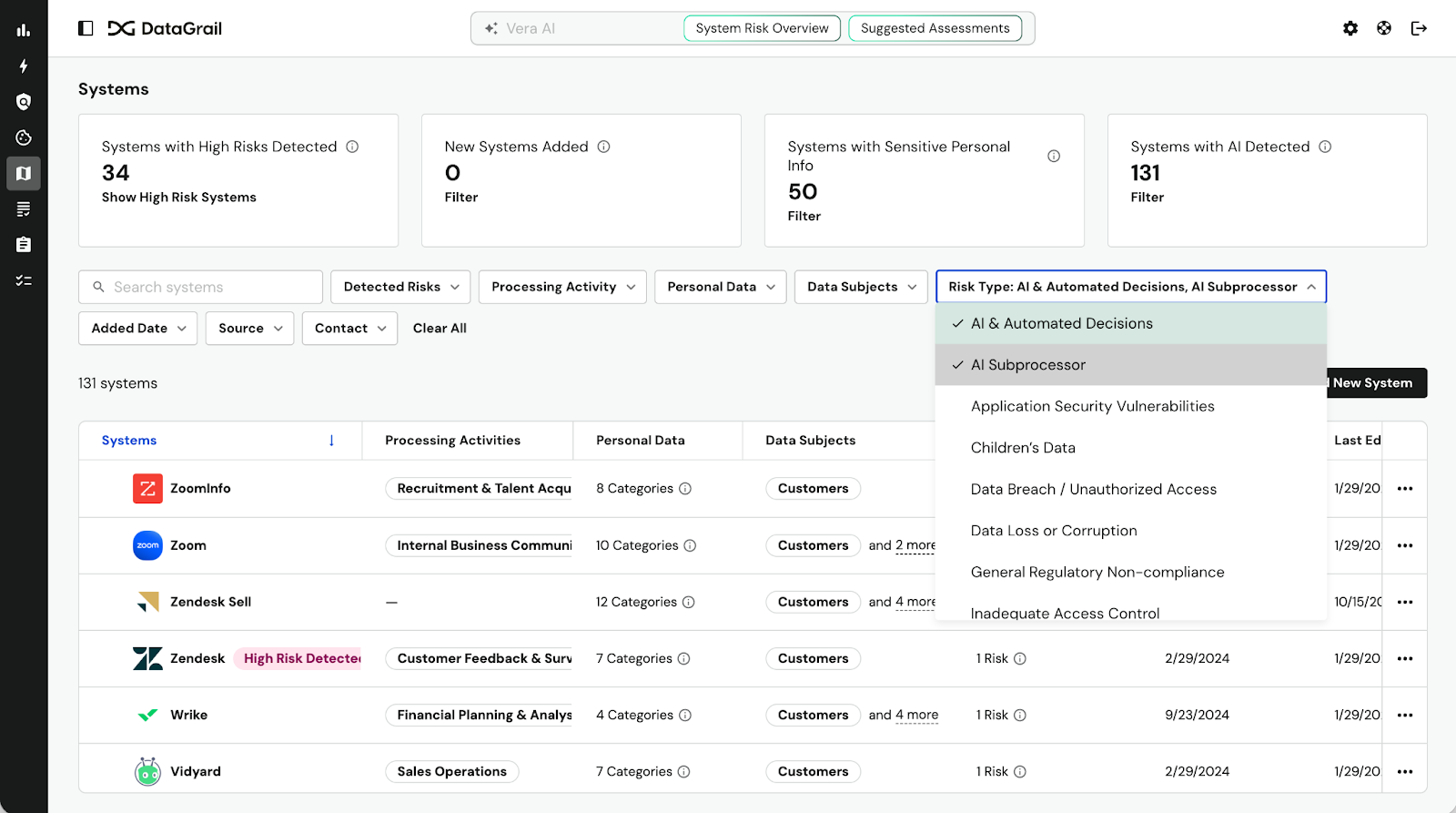

Alternatively, you may find that despite your best efforts, your organization is already using AI. If you’re a DataGrail Live Data Map user, you can filter your system inventory to reveal systems in your tech stack with AI capabilities.

Check in with system owners to confirm whether AI capabilities are enabled and any safeguards in place. After addressing any immediate concerns, you can also compare the AI system against the principles you established earlier, iterate on your policy as needed, and confirm the right people are involved with a real use case.
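If your inventory lives in a spreadsheet or export rather than a dedicated tool, the same triage can be sketched in a few lines. The inventory structure and field names below are assumptions made for illustration and do not reflect any specific product's schema.

```python
# Hypothetical inventory export. "ai_enabled" is None until the system owner
# confirms whether the AI capabilities are actually switched on.
inventory = [
    {"system": "crm", "ai_capabilities": ["lead scoring"], "ai_enabled": None, "owner": "sales-ops"},
    {"system": "payroll", "ai_capabilities": [], "ai_enabled": None, "owner": "finance"},
    {"system": "helpdesk", "ai_capabilities": ["reply drafting"], "ai_enabled": True, "owner": "support"},
]


def systems_needing_owner_check(systems):
    """Return names of systems with AI capabilities but no owner confirmation yet."""
    return [
        s["system"]
        for s in systems
        if s["ai_capabilities"] and s["ai_enabled"] is None
    ]


to_confirm = systems_needing_owner_check(inventory)
print(to_confirm)
```

Systems that are confirmed as enabled can then move on to the comparison against your AI principles.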

Phase 3: Invite Responsible Exploration

Day 90 – 180

When you’re ready, it’s time to prepare for organization-wide adoption. If you’re a DataGrail Assessments customer, your first step will be to finalize an AI risk assessment and incorporate it into your procurement process. This documentation is a regulatory requirement, and it is best to start as soon as teams begin requesting their own AI tools.

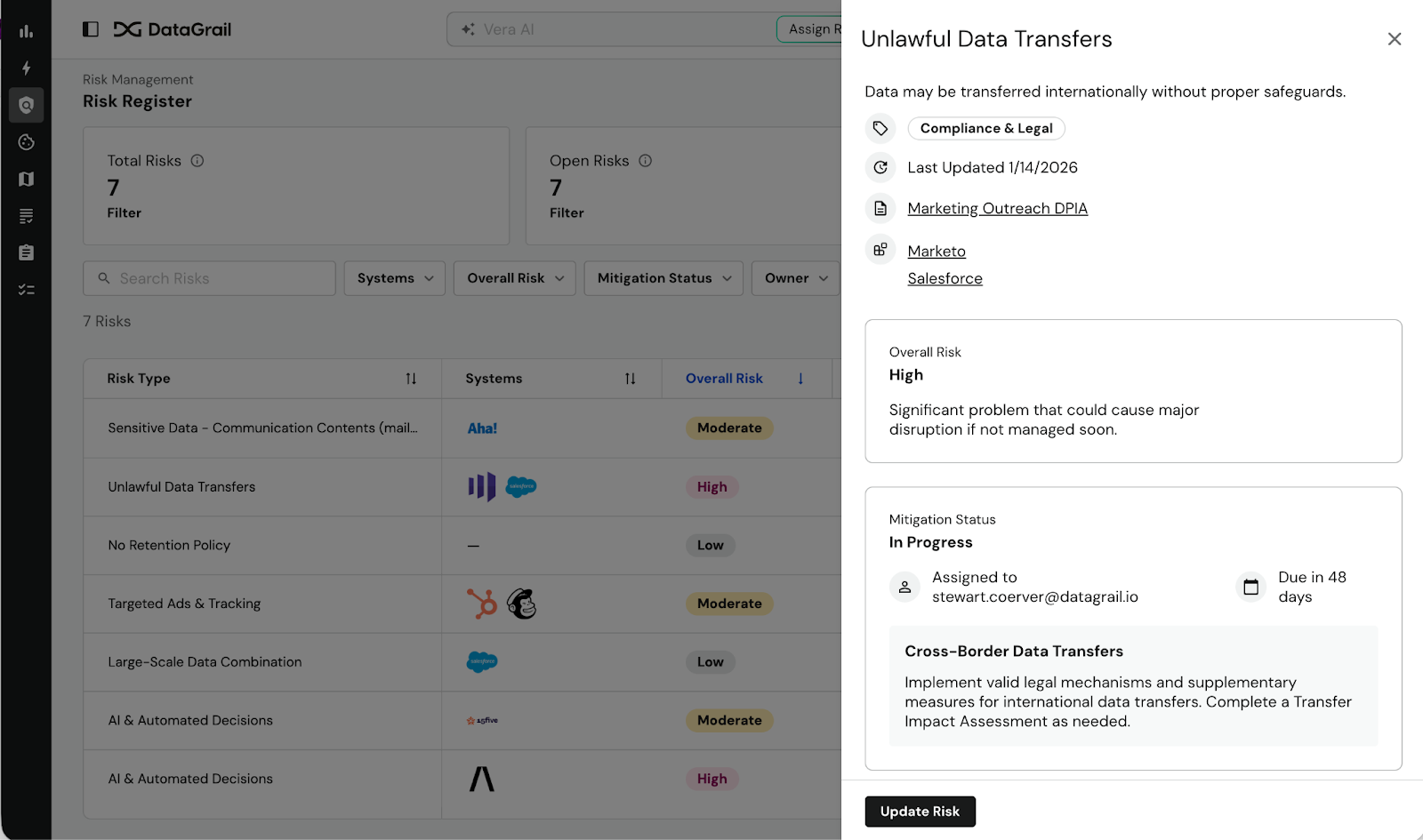

DataGrail can reveal potential privacy risks using system metadata right on the risk assessment, educating contributors on risks they may not have been aware of. Once you approve the assessment and any related mitigation measures, they’ll also appear in one central place on DataGrail Risk Register.

Also take time to revisit user disclosures during this stage. Several state privacy laws require that any use of automated decision making is transparently identified to consumers, including providing a basic explanation of the model’s logic.

Recommended Reading During Phase 3:

- Risk Assessments for Every Regulation: A Beginner’s Guide

- A Privacy Manager’s Guide to AI Procurement

- DataGrail for AI Governance

Check out how LastPass uses privacy impact assessments to accelerate responsible AI innovation.

Phase 4: Mature and iterate

Day 180+

Now that your organization has begun adopting AI in earnest, it’s time to take a step back and monitor the risk. Using Risk Register, you can accomplish this through AI-powered insights tracking AI capabilities and mitigation strategies across systems and processing activities. Risk Register creates an auditable record that you can also use to strategically inform new risk mitigation efforts.

For example, you might decide to introduce a new standardized AI Addendum to your Data Processing Agreements (DPAs) requiring certain risk controls of your third-party vendors, or you might decide to regularly re-assess existing AI vendors.

Regularly revisit AI tools’ security and access controls to ensure proprietary and personal data are protected. Risk mitigation measures for AI tools will need to be revisited periodically since models evolve over time, growing more complex while losing memory of earlier rules. You can also train your organization on these risks so that they can help catch early signs of model drift.

Recommended Reading During Phase 4:

- GrailCast Live Ep. 1 Rewind: AI, Privacy, & the Future of the Web ft. Ty Sbano

- DataGrail Risk Register

Frequently Asked Questions

If you just need a quick refresh, here are the most important things to know when it comes to AI governance.

Which regulations require AI governance?

The EU AI Act, the Colorado AI Act, and several other regulations mandate certain AI governance activities.

What are the most common challenges in AI governance?

So far, high-profile AI governance failures have highlighted:

- Lack of consent or sufficient transparency for consumers on how AI will process their data

- Insufficient controls for preventing the AI system from sharing personal data

- Unreliable model outputs created by feeding AI outdated or inaccurate data without human oversight or options for intervention

Why should organizations prioritize AI governance?

Consumers want to understand how their data is used, and the technical nature of AI can be a barrier. Companies with strong AI governance benefit from greater consumer trust while avoiding fines and legal action related to data privacy, cybersecurity, and/or discrimination.

What should an AI governance program include at minimum?

At their most basic, AI governance programs need a risk assessment process, internal and external documentation of AI usage and controls, human alternatives to automated decision making, and an audit trail of all of these efforts.