Completing risk assessments such as data protection impact assessments (DPIAs) and privacy impact assessments (PIAs) before beginning a potentially high-risk processing activity isn’t just a best practice. It’s a legal obligation under GDPR, CCPA/CPRA, and many other regulations.

Fortunately, you don’t need to create completely separate risk assessments for every privacy law. Build a serious practice of evaluating risky projects thoroughly, ensure your insights are genuinely applied towards risk mitigation, and regulatory compliance will naturally follow.

Most regulations do not provide an exact script or template for privacy risk assessments, but do identify their minimum requirements. This gives you the flexibility to design a risk assessment process that genuinely meets the needs of your unique organization. Let’s get into it.

Why do I need privacy risk assessments?

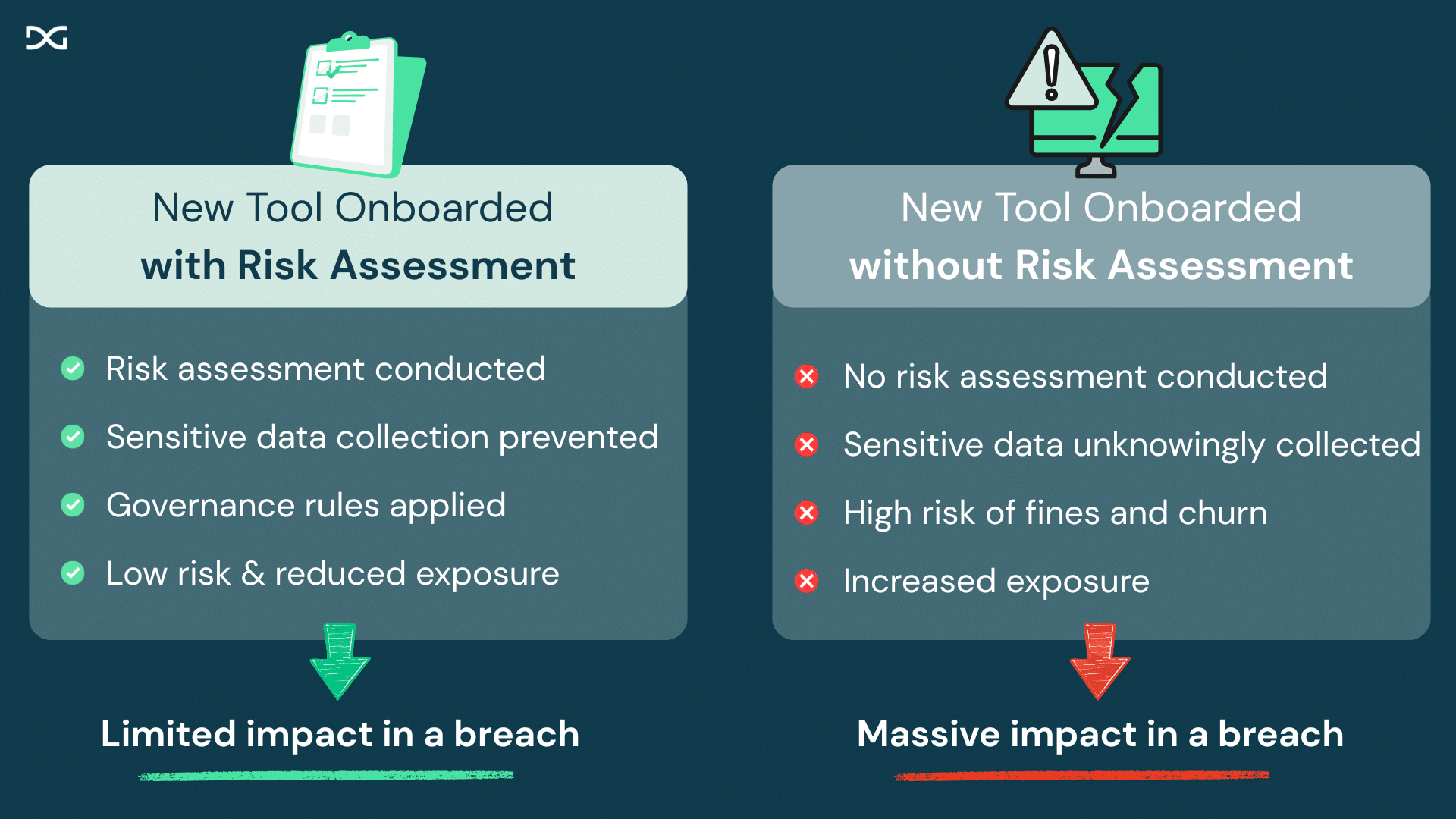

Taking the time to assess privacy risk before starting a new processing activity gives you the opportunity to mitigate that risk, improving safety for the consumer as well as your company’s own brand reputation.

If the practical application of risk assessments does not inspire your organization, keep in mind that risk assessments are already a legal requirement under major privacy laws like GDPR in the EU and the CCPA in California.

These requirements are not decorative. Here are just a few examples of companies that have been penalized for failure to adequately complete risk assessments, among other violations:

- Clearview AI: €30.5 million fine for violations including failing to perform a DPIA before high-risk biometric data processing

- Meta: €405 million fine for failing to complete risk assessments and properly mitigate risks related to children’s privacy

- (Unnamed Medical Company): €55k fine for failure to create a DPIA despite handling sensitive data

Note that until 2026, all privacy regulations regarding risk assessments simply required documentation to be available upon request. The CCPA’s 2026 amendments are the first to require that risk assessments also be formally submitted to CalPrivacy on an annual basis.

Following the example set by GDPR, California is also the first U.S. state to introduce personal executive liability for failure to complete risk assessments accurately and on time. Risk assessments have always been required before a processing activity begins, but these factors combined have put increasing pressure on privacy teams to improve their privacy risk assessment practice and documentation.

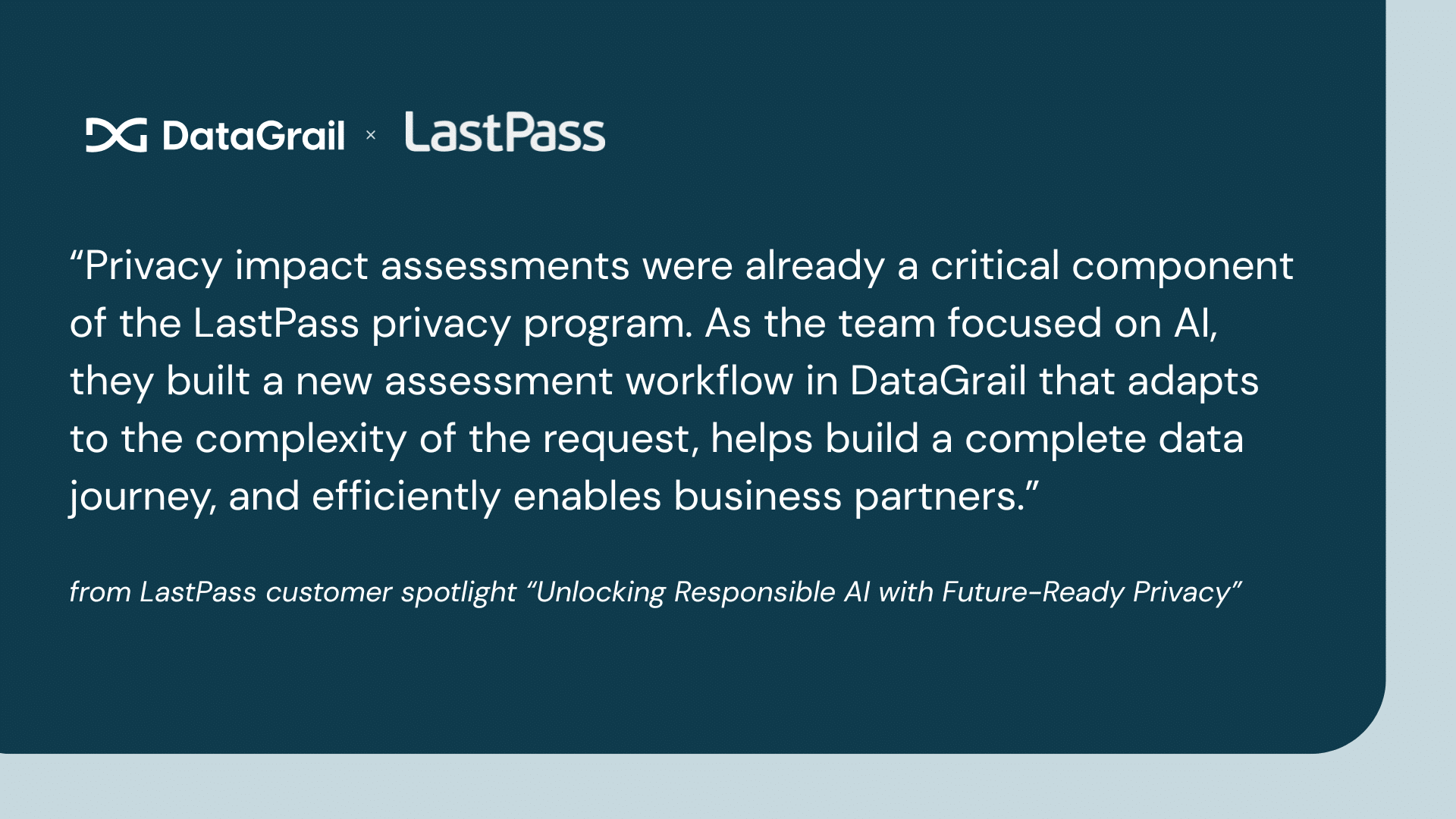

Check out how LastPass uses privacy impact assessments to accelerate responsible AI innovation.

Inventory your requirements

First, we need to understand your obligations. Compare the table below with where your company does business for a starting point.

| Location | Assessment Name* | Sample triggers | Submission Requirements |
| --- | --- | --- | --- |
| EU (GDPR), UK (GDPR), Brazil (LGPD) | Data Protection Impact Assessment | Activity that is likely highly risky to individuals (e.g. profiling, processing sensitive data, public-monitoring) | No routine submission, but must be available upon request and companies should consult their supervisory authority before enacting a high privacy risk that cannot be mitigated |
| Virginia (VCDPA), Colorado (CPA) | Data Protection Assessment | Activity that is likely risky to individuals (e.g. processing sensitive data) | No routine submission, but must be available upon request |
| Connecticut (CTDPA) | Data Protection Assessment; Impact Assessment | Activity that is likely risky to individuals (e.g. processing sensitive data); certain forms of profiling | No routine submission, but must be available upon request |
| California (CCPA) | Privacy Risk Assessment | Processing sensitive data, automated decision-making | Annual routine submission (first due April 1, 2028) and must be available upon request |
| China (PIPL) | Personal Information Protection Impact Assessment | Processing sensitive data, automated decision-making, sharing certain data with third-parties, cross-border data transfers | No routine submission, but must be available upon request |
| Colorado (SB24-205) | AI Governance/Impact Assessment | Potential for algorithmic discrimination | No routine submission, but must be available upon request |
| EU (EU AI Act) | Risk Assessment | Social scoring, subliminal techniques, processing biometric data, predictive policing, emotion recognition | No routine submission, but must be available upon request |
| Quebec (Law 25) | Privacy Impact Assessment | Cross-border data transfers | No routine submission, but must be available upon request |

You should always confirm your regulatory obligations with legal counsel. Your requirements may vary depending on other factors such as whether your company is considered a controller or processor of the data.
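As a starting point for this inventory, the table above can be captured as a simple lookup. This is only a sketch: the region keys, the `Obligation` class, and the `obligations_for` helper are hypothetical names, the rows are transcribed from the table rather than from the regulations themselves, and the result does not replace a review by legal counsel.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Obligation:
    regulation: str
    assessment_name: str
    routine_submission: bool  # True only where the table lists a routine filing


# Rows transcribed from the table above. The region keys are hypothetical labels.
OBLIGATIONS = {
    "EU": [
        Obligation("GDPR", "Data Protection Impact Assessment", False),
        Obligation("EU AI Act", "Risk Assessment", False),
    ],
    "UK": [Obligation("UK GDPR", "Data Protection Impact Assessment", False)],
    "Brazil": [Obligation("LGPD", "Data Protection Impact Assessment", False)],
    "Virginia": [Obligation("VCDPA", "Data Protection Assessment", False)],
    "Colorado": [
        Obligation("CPA", "Data Protection Assessment", False),
        Obligation("SB24-205", "AI Governance/Impact Assessment", False),
    ],
    "Connecticut": [
        Obligation("CTDPA", "Data Protection Assessment; Impact Assessment", False)
    ],
    "California": [Obligation("CCPA", "Privacy Risk Assessment", True)],
    "China": [
        Obligation("PIPL", "Personal Information Protection Impact Assessment", False)
    ],
    "Quebec": [Obligation("Law 25", "Privacy Impact Assessment", False)],
}


def obligations_for(regions):
    """Collect the table rows for every region where the company does business."""
    found = []
    for region in regions:
        # Unknown regions are skipped rather than treated as "no obligations".
        found.extend(OBLIGATIONS.get(region, []))
    return found


if __name__ == "__main__":
    for item in obligations_for(["EU", "California"]):
        filing = "annual submission" if item.routine_submission else "on request"
        print(f"{item.regulation}: {item.assessment_name} ({filing})")
```

A region missing from the lookup returns nothing, which should be read as "not covered by this table", not as "no requirement".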

Understand assessments, requirements, and usage

In this guide, we use the term “risk assessments” to refer collectively to both privacy risk assessments and AI risk assessments. Further definitions below:

Risk assessments: An unofficial umbrella term often used, as in this guide, to refer to assessments generally.

Privacy risk assessments: A group of privacy-specific assessments. There are multiple flavors, such as the GDPR-specific DPIA (Data Protection Impact Assessment) or the US-preferred PIA (Privacy Impact Assessment), but they generally evaluate the same basic privacy risk criteria and mitigation measures. Most regulations only require privacy risk assessments for “high risk” processing activities. While different regulations define “high risk” differently, most generally align with GDPR Article 35’s “likely to result in a high risk to the rights and freedoms of natural persons.”

AI risk assessments: Designed to specifically evaluate risk related to AI and are typically additive to existing privacy risk assessment requirements, but not substitutes for them. Required by the EU AI Act, and can also be used electively.

Although AI risk assessments may include overlapping questions with a privacy risk assessment, organizations still must consider both. AI risk assessments are more technical in nature and concerned with AI-specific privacy risks. Privacy risks of an AI tool that don’t specifically relate to its AI functionality are still relevant. Your organization must complete both assessment types as either separate artifacts or together as one consolidated larger assessment addressing all requirements across both regulations.

Identify when to initiate risk assessments

Once you understand your legal requirements, it’s time to build a process around them that makes sense for your business.

While exact triggers vary by regulation, you will typically need to complete a privacy risk assessment before:

- Collecting sensitive personal information (biometric data, financial data, geolocation data, children’s data, data regarding a protected group, etc.)

- Processing sensitive personal information in a new way, especially selling or sharing the data or processing sensitive personal information with AI

- Transferring data across borders

- Creating profiles of personal information

- Implementing any form of automated decision making

- Building new AI products to sell to the public

- Any use of AI that could meaningfully impact personal access or human rights (e.g. social scoring, subliminal messaging, predictive policing, emotion recognition)

Once you have identified the triggers relevant to your business, evaluate what opportunities you have to identify those triggers before processing advances.

Most mature privacy teams operationalize their risk assessments through a dedicated step in the procurement process. Depending on the organization structure and needs, risk assessments may also play a role in product review cycles, contract renewals, and other events.
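To make the trigger list above concrete, here is a minimal sketch of an intake screen that flags when a request should be routed to a full privacy risk assessment. The `IntakeRequest` fields and the `triggered` and `needs_assessment` helpers are hypothetical names chosen for illustration. The labels paraphrase the list above and are not a complete statement of any regulation’s triggers.

```python
from dataclasses import dataclass


@dataclass
class IntakeRequest:
    """Hypothetical yes/no answers captured on a procurement or product intake form."""

    collects_sensitive_data: bool = False
    new_use_of_sensitive_data: bool = False
    cross_border_transfer: bool = False
    profiling: bool = False
    automated_decision_making: bool = False
    sells_ai_product: bool = False
    high_impact_ai_use: bool = False


# Labels paraphrase the trigger list above, keyed by IntakeRequest field name.
TRIGGER_LABELS = {
    "collects_sensitive_data": "Collecting sensitive personal information",
    "new_use_of_sensitive_data": "Processing sensitive personal information in a new way",
    "cross_border_transfer": "Transferring data across borders",
    "profiling": "Creating profiles of personal information",
    "automated_decision_making": "Implementing automated decision making",
    "sells_ai_product": "Building new AI products to sell to the public",
    "high_impact_ai_use": "AI use that could impact personal access or human rights",
}


def triggered(request):
    """Return the label of every trigger that the request answered 'yes' to."""
    return [label for name, label in TRIGGER_LABELS.items() if getattr(request, name)]


def needs_assessment(request):
    """A single matching trigger is enough to route the request to the privacy team."""
    return bool(triggered(request))


if __name__ == "__main__":
    request = IntakeRequest(cross_border_transfer=True, profiling=True)
    print(needs_assessment(request), triggered(request))
```

Keeping the screen to a handful of yes/no answers means it can sit inside an existing procurement form without adding much friction for the requester.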

How to administer risk assessments

Now that you understand your requirements, it’s time to start building the actual risk assessment process. This could include data protection impact assessments, privacy impact assessments, AI risk assessments, transfer risk assessments, and any other privacy risk assessment you need. You’ll start by creating a questionnaire.

Building risk assessments

Unnecessarily complex risk assessments can lead even the best-intentioned business partners to put them off or complete them only partially. When planning your risk assessment process, it’s important to keep your assessments concise and focused.

The best privacy teams limit how many unique assessment templates they use while avoiding forcing submitters to navigate through too many irrelevant fields. This can be accomplished either through the use of conditional logic to show or conceal fields based on prior responses, or through a triaging method accomplished by multiple phases of risk assessments.
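The conditional-logic approach can be sketched in a few lines. This is an illustrative assumption about how such a form might be modeled, not a description of any particular product: the question IDs, the `show_if` key, and the `visible_questions` helper are all hypothetical.

```python
# Hypothetical questionnaire: each question may depend on an earlier answer.
# "show_if" is either None (always shown) or a (question_id, expected_answer) pair.
QUESTIONS = [
    {"id": "handles_personal_data", "show_if": None},
    {"id": "data_categories", "show_if": ("handles_personal_data", True)},
    {"id": "uses_ai", "show_if": ("handles_personal_data", True)},
    {"id": "ai_training_data", "show_if": ("uses_ai", True)},
    {"id": "cross_border", "show_if": ("handles_personal_data", True)},
    {"id": "transfer_destinations", "show_if": ("cross_border", True)},
]


def visible_questions(answers):
    """Return the IDs of the questions a submitter should see, in form order."""
    visible = []
    for question in QUESTIONS:
        condition = question["show_if"]
        if condition is None:
            visible.append(question["id"])
            continue
        depends_on, expected = condition
        # A follow-up appears only if its parent is itself visible and was
        # answered with the expected value, so hidden branches stay hidden.
        if depends_on in visible and answers.get(depends_on) == expected:
            visible.append(question["id"])
    return visible


if __name__ == "__main__":
    print(visible_questions({"handles_personal_data": True, "uses_ai": True}))
```

A submitter whose tool handles no personal data sees one question, while an AI tool that moves data across borders sees every branch relevant to it.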

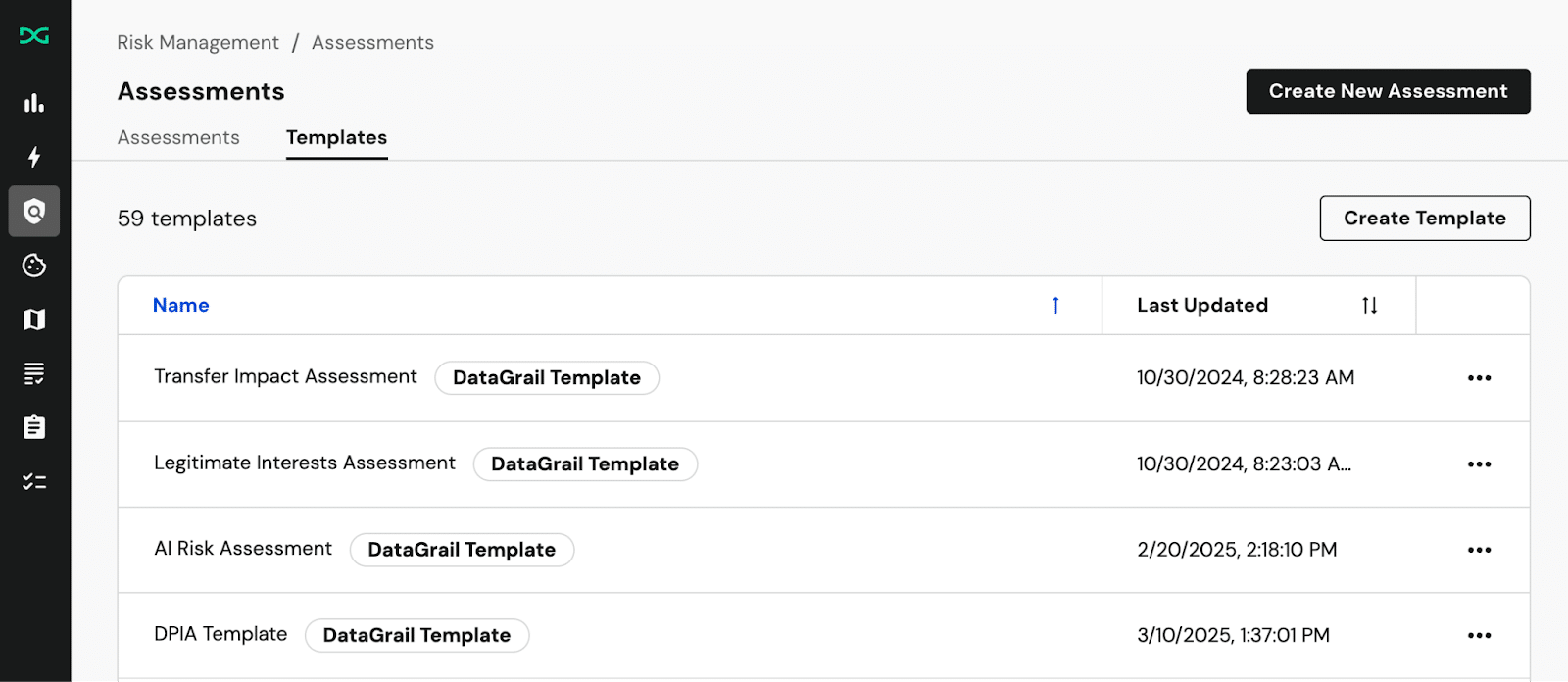

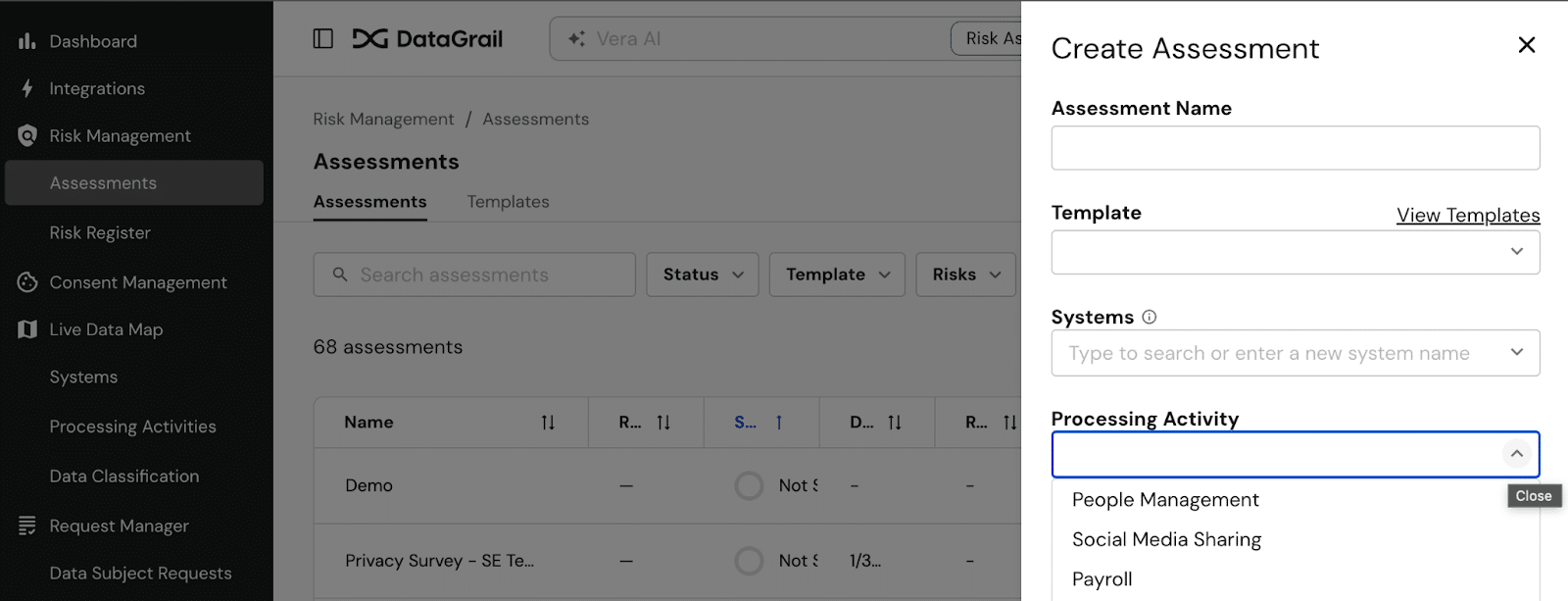

Privacy teams use DataGrail to get a head start using our template privacy risk assessments:

- Data Protection Impact Assessment (DPIA) Template

- AI Risk Assessment Template

- Legitimate Interests Assessment Template

- Transfer Impact Assessment Template

Each template covers the questions regulators want you to evaluate before the associated processing activity begins. Note that DataGrail’s DPIA template covers all GDPR requirements, but also incorporates requirements specific to U.S. state privacy laws so that you can reduce the total number of risk assessments you need to produce per processing activity.

Customizing your own risk assessment

You can also create a custom template of your own. Just make sure it meets the needs of each relevant regulation. For a privacy risk assessment, use the GDPR DPIA Requirements & Template to get started, and add any relevant additional questions to cover requirements of other jurisdictions, such as those listed in the CCPA Risk Assessments Guide. If you want to consolidate your privacy risk assessments, you need to ensure they reflect the most stringent interpretation of all relevant privacy laws.
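One way to think about consolidation is as a union: start from a base template and add every question that any applicable jurisdiction requires, without duplicates. The sketch below assumes this framing. The question IDs and jurisdiction names are placeholders for illustration only and do not represent what any law actually requires.

```python
# Placeholder question IDs; not a statement of any regulation's requirements.
BASE_TEMPLATE = ["purpose", "data_categories", "risks", "mitigations"]

# Hypothetical extra questions per jurisdiction, some overlapping the base.
JURISDICTION_ADDITIONS = {
    "jurisdiction_a": ["risks", "benefit_analysis"],
    "jurisdiction_b": ["transfer_safeguards", "mitigations"],
}


def consolidated_template(jurisdictions):
    """Merge the base template with each jurisdiction's additions, keeping order."""
    questions = list(BASE_TEMPLATE)
    for jurisdiction in jurisdictions:
        for question in JURISDICTION_ADDITIONS.get(jurisdiction, []):
            if question not in questions:  # skip questions already covered
                questions.append(question)
    return questions


if __name__ == "__main__":
    print(consolidated_template(["jurisdiction_a", "jurisdiction_b"]))
```

A union covers question coverage only. Where two laws ask the same question with different thresholds or deadlines, the stricter value still has to be chosen by hand.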

When do I need more than the risk assessment?

Some risk assessment types require additional related artifacts attached to their risk assessment.

- GDPR requires documentation of a Record of Processing Activities (RoPA) in addition to risk assessments. GDPR also requires a Residual Risk Consultation Record documenting consultation with the supervisory authority under Article 36 if high risk remains even after mitigation attempts.

- The EU AI Act requires Conformity Assessment Documentation alongside a basic AI risk assessment, and may also require a Fundamental Rights Impact Assessment for deployers of certain high-risk AI systems.

- CCPA/CPRA requires a signed Executive Attestation certifying each risk assessment and, when all risk assessments are submitted collectively for the year, a Submitted Risk Assessment Summary.

While not always explicitly required, regulators also expect to see documented risk mitigation plans and action registers, assessment review and approval records, and records of re-evaluation after processing changes. DataGrail Assessments provide this audit trail out-of-the-box.

Tips for making risk assessments intuitive for non-privacy contributors

Privacy questions will typically be answered first by one of your stakeholders. For example, if an HR operations professional is requesting a new people management tool, they might complete a risk assessment during procurement, or answer a few questions as part of a separate form that could then alert the privacy team that a full assessment was required.

But not everyone at your company will begin as a privacy expert, and you can’t be confident they would evaluate privacy risk in the same terms as the privacy team. In addition to keeping the questionnaire concise, you can support your teammates with these tips:

Automate risk assessments

DataGrail Assessments use AI to help pre-fill form questions with up-to-date system information from Live Data Map. DataGrail can reveal potential privacy risks using system metadata. This dramatically expedites the submission process and can be more accurate than responses the requester may provide.

In some cases, your stakeholders may even learn about privacy risks they weren’t aware of while completing their privacy risk assessment! A more efficient form both improves compliance and keeps stakeholders collaborative.

Educate stakeholders

Aim to train your team not just on the steps required of them, but the bigger picture “why” behind risk assessments. Your colleagues need to understand what you’re looking for in order to efficiently complete your assessments.

Prepare your stakeholders for the possibility that the assessment will uncover more privacy risks than they expected. Ensure your training addresses what the stakeholder can do to identify and enact appropriate mitigation strategies.

Process risk assessments

While approving assessments, the privacy team has several basic responsibilities:

- Confirm known risks and relevant mitigation measures are in place and documented. This could include recommending common privacy best practices such as data minimization or anonymization to reduce risk.

- Compare risks against benefits. Regulators generally expect organizations to assess necessity, proportionality, and whether identified risks are appropriately mitigated in light of the purpose of the processing.

- Ensure accuracy. For an increasing number of jurisdictions, privacy leaders (such as Data Protection Officers) or even members of the executive team must personally attest that risk assessments were completed on time and accurately. The assessment reviewer is the first line of defense.

Monitor risk across your ecosystem

Privacy regulations require risk assessments to ensure your organization thinks critically before initiating a risky processing activity, but ultimately this requirement is intended to serve a broader goal of responsible data governance.

- GDPR Article 30 requires maintaining a detailed and current Record of Processing Activities (RoPA). Your risk assessments should give you a head start on your RoPA and ensure it is kept up to date.

- Most privacy laws impose heightened requirements for processing sensitive data, which may include consent or another permitted legal basis. Shadow IT and shadow AI can obfuscate your data flows and leave your risk status unclear. Maintaining close oversight of your assessments and their intersecting risks can help you get ahead of these concerns and advocate for privacy-by-design across your institution.

With DataGrail Risk Register, these steps are automated. Risks discovered in your privacy risk assessments are centralized and tracked across your tech stack. Review recommended risk mitigation strategies and track their progress from the same space.

By making privacy risk assessments actionable in this way, assessments can be so much more than a compliance check box. Assessments stay meaningful longer and fuel a more proactive compliance posture across the organization.

Read how MoonPay centralizes risk tracking and applies automation towards risk management.

Frequently Asked Questions

If you just need a quick refresh, here are the most important things to know when it comes to creating a risk assessment process.

Which regulations require privacy & AI risk assessments?

GDPR, CCPA, VCDPA, CTDPA, CPA, PIPL, LGPD, and several other regulations require risk assessments as part of privacy compliance.

When applicable:

- GDPR and the EU AI Act require data protection impact assessments and (AI) risk assessments

- UK GDPR and LGPD require data protection impact assessments

- VCDPA requires data protection assessments

- CTDPA requires data protection assessments and (AI) impact assessments

- CPA requires data protection assessments and AI governance/impact assessments

- CCPA requires privacy risk assessments

- PIPL requires personal information protection impact assessments

- Quebec Law 25 requires privacy impact assessments

All of these are forms of privacy risk assessments or AI risk assessments.

What can happen if my organization does not complete risk assessments?

Failing to complete accurate risk assessments in a timely manner risks regulatory penalties, fines, enforcement actions, and in some cases, personal liability.

- To date, failure to complete risk assessments has been cited alongside other privacy violations in fines as high as €405 million.

- Both GDPR and CCPA hold executives personally and legally accountable for the accurate and timely completion of risk assessments, meaning that in extreme cases, a non-compliant individual could face personal liability. Depending on the jurisdiction of the complaint, this could even include criminal penalties.

Can small and mid-size businesses be fined for not completing risk assessments?

Yes. While many popular stories of GDPR enforcement focus on very large companies, note that risk assessment requirements are not exclusive to enterprise businesses.

GDPR fines often do not name their recipients publicly, making them somewhat difficult to tabulate by company size. In this case, for example, a company was fined €55,000 for a number of privacy issues, including risk assessments.

Additionally, California has historically pursued mid-market companies just as aggressively as enterprise. Now that California’s privacy assessment requirements are enforceable, mid-size companies should observe CCPA privacy assessments carefully.

When do risk assessments need to be completed?

The risk assessment should be completed, processed, and approved before the processing activities begin. Most regulations also require the risk assessment be repeated if the processing activity notably changes in some way.

For most regulations, risk assessments only need to be shared with regulatory authorities upon request. Under CCPA, however, risk assessments must also be submitted annually. The first CCPA risk assessment deadline is not until April 1, 2028, but risk assessments for all processing activities initiated or updated on or after January 1, 2026 must be submitted by that date.
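The CCPA dates above translate into a simple date check. This sketch encodes only the two dates stated in this guide. The function name and parameters are hypothetical, and it does not model later annual cycles or any exemptions, so confirm scope with legal counsel.

```python
from datetime import date

# Dates as stated in this guide.
COVERAGE_START = date(2026, 1, 1)  # activities initiated or updated on or after this date
FIRST_DEADLINE = date(2028, 4, 1)  # first annual submission due


def in_first_ccpa_submission(initiated, last_updated=None):
    """Return True if the activity started or changed on or after COVERAGE_START."""
    latest_change = max(initiated, last_updated) if last_updated else initiated
    return latest_change >= COVERAGE_START


if __name__ == "__main__":
    legacy_tool = in_first_ccpa_submission(date(2024, 5, 1))
    updated_tool = in_first_ccpa_submission(date(2024, 5, 1), last_updated=date(2026, 3, 15))
    print(legacy_tool, updated_tool, FIRST_DEADLINE.isoformat())
```

Note that an older activity is pulled into scope as soon as it is updated, which is why tracking the date of the last material change matters as much as the start date.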

What type of activities require risk assessments?

While exact activities that necessitate a risk assessment can vary by regulation, generally organizations should complete a risk assessment prior to initiating a processing activity that could create high privacy risk for consumers. This includes:

- Collecting sensitive personal information (biometric data, financial data, geolocation data, children’s data, data regarding a protected group, etc.)

- Processing sensitive personal information in a new way, especially selling or sharing the data or processing sensitive personal information with AI

- Transferring data across borders

- Creating profiles of personal information

- Implementing any form of automated decision making

- Building new AI products to sell to the public

- Any use of AI that could meaningfully impact personal access or human rights (e.g. social scoring, subliminal messaging, predictive policing, emotion recognition)

What types of privacy risk assessments are there?

General privacy risk assessments go by many names, such as data protection impact assessments (DPIAs), privacy impact assessments (PIAs), and more. The purpose of these assessments is basically the same. The difference in naming has less to do with the substance of the assessment than with how the risk assessment is regulated in its jurisdiction.

Some privacy teams create risk assessments focused on specific use cases such as AI Risk Assessments, Transfer Impact Assessments, or Legitimate Interest Assessments. This is especially important in the case of AI Risk Assessments where a variety of technical questions must be answered to fully understand privacy risk.

Is there a template I have to follow for risk assessments?

There is no official mandatory risk assessment template, but GDPR does provide an example. DataGrail also offers four risk assessment templates.

How can I ensure risk assessments are completed accurately?

Ensure assessments are easy to understand and efficient to complete.

Use DataGrail Assessments to:

- Build your templates with conditional logic so that stakeholders never have to answer an irrelevant question

- Let AI pre-fill form questions with up-to-date system information from your tech stack

- Add multiple contributors so everyone shares a single source of truth

Where can I learn more about DataGrail privacy assessments?

Request a demo or take an independent tour today.

Further Reading

CCPA Risk Assessments: A Practical Guide for Privacy Teams

DataGrail’s Risk Register Platform

On-Demand: DataGrail in Action: Risk Register Product Demo

On-Demand: Building Better RoPAs: How to streamline your RoPA and uncover AI risk