Guardrails & Governance: A Quick Start Guide to AI Agents for Privacy Teams

I regularly meet with Chief Legal Officers and Chief Information Security Officers who want to experiment with AI agents, but they have no idea where to start. This isn’t a tooling gap. It is a governance gap. So I created a one-page guide to applying AI agents to privacy work.

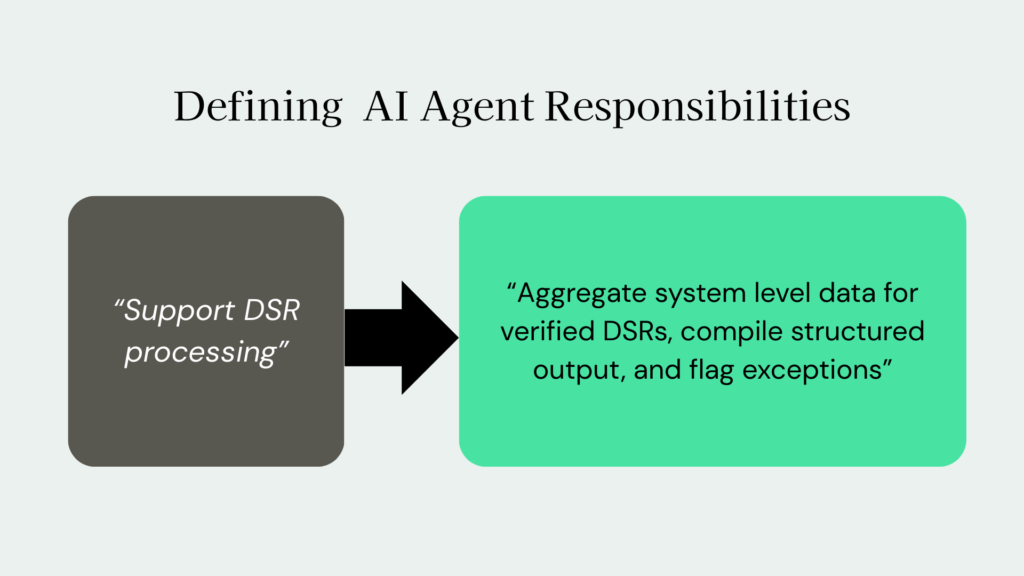

Step one: Define the agent’s job to be done

An agent is not just “AI help.” Treat it like any other role on your team: identify its expected responsibilities and outputs.

Before deployment, document:

- The specific workflow the agent owns

- The systems it interacts with

- The inputs it can access

- The outputs it is allowed to generate

- The business objective it supports

This mirrors how mature privacy programs automate data subject request (DSR) workflows and data mapping with precision, not guesswork. If the scope is vague, risk increases. If the scope is narrow and measurable, risk decreases.
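One way to keep that scope honest is to write it down as a structured record and check it for gaps before deployment. The sketch below is only an illustration: the `AgentCharter` class, its field names, and the example values are my own assumptions, not part of any product or standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentCharter:
    """One-page scope definition for a single agent (hypothetical structure)."""
    workflow: str                # the specific workflow the agent owns
    systems: tuple[str, ...]     # the systems it interacts with
    inputs: tuple[str, ...]      # the inputs it can access
    outputs: tuple[str, ...]     # the outputs it is allowed to generate
    business_objective: str      # the business objective it supports

    def missing_fields(self) -> list[str]:
        """Return the names of any scope fields left empty."""
        return [name for name, value in vars(self).items() if not value]


# Example values are made up for illustration.
charter = AgentCharter(
    workflow="DSR intake triage",
    systems=("ticketing", "data_map"),
    inputs=("request_form",),
    outputs=(),  # deliberately left empty to show the check
    business_objective="Meet DSR response deadlines",
)
print(charter.missing_fields())  # ['outputs']
```

An empty list from `missing_fields()` does not prove the scope is good, only that nothing was left blank.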

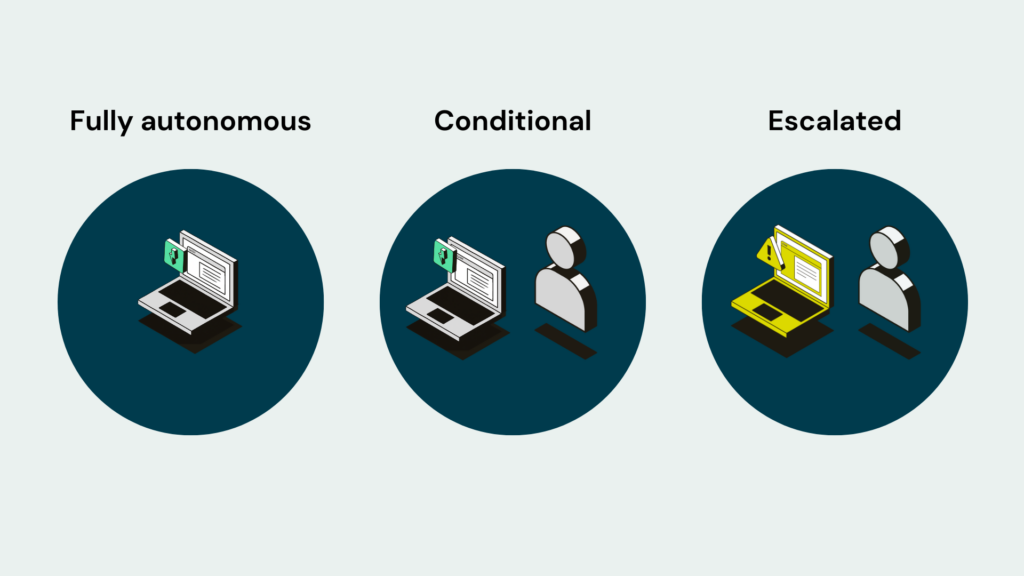

Step two: Establish decision boundaries

Every agent needs a decision matrix.

Document three categories:

Fully autonomous decisions

Tasks the agent can complete end to end without review.

Examples:

- Deadline tracking

- Status updates

- Pre-populating assessment templates

- Flagging missing metadata

Conditional decisions (human approval required)

The agent proposes. A human approves.

Examples:

- DPIA risk scoring above defined thresholds

- Denial of a data subject request

- Consent rule changes affecting tracking behavior

Escalation triggers

Automatic handoff to human oversight.

Examples:

- Cross-border transfer issues

- Children’s data processing

- Sensitive category data detection

- Conflicting regulatory interpretations

Without defined thresholds, nothing scales. Security leaders think in access control and incident thresholds. Privacy AI needs the same discipline.
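The three categories above can be expressed as a small routing function. This is a minimal sketch, assuming hypothetical task and trigger labels that I derived from the examples in this step. Your own matrix will differ. Note the two design choices: escalation triggers override everything else, and any task that is not listed fails closed to escalation.

```python
from enum import Enum


class Route(Enum):
    AUTONOMOUS = "autonomous"
    NEEDS_APPROVAL = "needs_approval"
    ESCALATE = "escalate"


# Hypothetical labels based on the examples in this step.
AUTONOMOUS_TASKS = {"deadline_tracking", "status_update",
                    "prefill_template", "flag_missing_metadata"}
APPROVAL_TASKS = {"dpia_high_risk_score", "deny_dsr", "consent_rule_change"}
ESCALATION_TRIGGERS = {"cross_border_transfer", "childrens_data",
                       "sensitive_category", "regulatory_conflict"}


def route(task: str, triggers: set[str]) -> Route:
    """Decide who handles a task. Escalation triggers win over everything else."""
    if triggers & ESCALATION_TRIGGERS:
        return Route.ESCALATE
    if task in APPROVAL_TASKS:
        return Route.NEEDS_APPROVAL
    if task in AUTONOMOUS_TASKS:
        return Route.AUTONOMOUS
    return Route.ESCALATE  # unknown task: fail closed


print(route("status_update", set()))               # Route.AUTONOMOUS
print(route("status_update", {"childrens_data"}))  # Route.ESCALATE
print(route("deny_dsr", set()))                    # Route.NEEDS_APPROVAL
```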

Step three: Define risk thresholds explicitly

Privacy leaders might be comfortable saying “this feels risky.” Agents need more quantifiable boundaries.

Document:

- What constitutes high, medium, and low risk

- What data categories elevate risk

- What jurisdictions trigger additional review

- What regulatory obligations apply by processing activity

- What financial or reputational exposure thresholds require executive visibility

Tie this back to your existing risk register and assessment frameworks. If you cannot codify risk logic, you cannot automate responsibly. This aligns with a security-first approach to automation that removes human error without creating new exposure.
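To show what "codified risk logic" can look like, here is a simplified sketch of a tiering function. Every weight, data category, jurisdiction, and cut-off in it is a hypothetical placeholder. The real values should come from your own risk register, not from this example.

```python
# All weights, categories, jurisdictions, and cut-offs are hypothetical placeholders.
DATA_CATEGORY_WEIGHTS = {"contact": 1, "financial": 3, "health": 5, "childrens": 5}
REVIEW_JURISDICTIONS = {"EU", "UK", "BR"}
HIGH_CUTOFF = 6
MEDIUM_CUTOFF = 3


def risk_tier(data_categories: set[str], jurisdictions: set[str]) -> str:
    """Map a processing activity to 'low', 'medium', or 'high'."""
    # Unknown categories get the maximum weight so gaps in the table fail safe.
    score = sum(DATA_CATEGORY_WEIGHTS.get(c, 5) for c in data_categories)
    # Any jurisdiction on the review list adds a fixed uplift.
    if jurisdictions & REVIEW_JURISDICTIONS:
        score += 2
    if score >= HIGH_CUTOFF:
        return "high"
    if score >= MEDIUM_CUTOFF:
        return "medium"
    return "low"


print(risk_tier({"contact"}, {"US"}))    # low (score 1)
print(risk_tier({"financial"}, {"US"}))  # medium (score 3)
print(risk_tier({"health"}, {"EU"}))     # high (score 5 + 2 = 7)
```

The point is not the arithmetic. It is that the thresholds are written down, reviewable, and testable.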

Step four: Lock down data access boundaries

Agents do not need universal access.

Define:

- Which systems the agent can query

- Whether access is read-only or action-based

- Whether data is tokenized, anonymized, or masked

- Where data is processed

- What logs are generated

Privacy AI should follow least-privilege principles. If your agent requires broad, persistent access across environments, you have designed it incorrectly.
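In code, least privilege usually comes down to deny-by-default. The sketch below assumes a hypothetical grants table with made-up system names. In practice this check would live in your identity and access management layer rather than in the agent itself.

```python
# Hypothetical grants: system name -> access modes permitted for this agent.
GRANTS = {
    "data_map": {"read"},            # read-only
    "ticketing": {"read", "write"},  # action-based
}


def is_allowed(system: str, mode: str) -> bool:
    """Deny by default: only explicitly granted (system, mode) pairs pass."""
    return mode in GRANTS.get(system, set())


print(is_allowed("data_map", "read"))   # True
print(is_allowed("data_map", "write"))  # False
print(is_allowed("hr_database", "read"))  # False (system not granted at all)
```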

Step five: Require auditability and logging

If a regulator asked, “How did this decision get made?” could you answer?

Every agent action should be:

- Logged

- Timestamped

- Traceable to source inputs

- Linked to a policy or rule

- Reconstructable

Privacy is a defensibility discipline. Your AI operating model should generate documentation automatically, not create blind spots.
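As a rough illustration, a single log entry covering those properties might be built like this. The function name, field names, and identifiers are assumptions for the example. A real deployment would likely store a reference to the inputs rather than raw personal data, and would write entries to tamper-evident storage.

```python
import hashlib
import json
from datetime import datetime, timezone


def audit_record(action: str, inputs: dict, policy_id: str) -> dict:
    """Build one log entry: timestamped, traceable, and linked to a rule."""
    # Sorting keys makes the hash independent of dictionary ordering.
    canonical = json.dumps(inputs, sort_keys=True)
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "action": action,
        "policy_id": policy_id,  # the rule that authorized the action
        "inputs": inputs,        # kept so the decision can be reconstructed
        "inputs_sha256": hashlib.sha256(canonical.encode("utf-8")).hexdigest(),
    }


# Hypothetical action and identifiers.
entry = audit_record("prefill_template", {"request_id": "R-1"}, "POLICY-7")
print(json.dumps(entry, indent=2))
```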

Step six: Embed human oversight by design

AI governance does not mean constant manual review. It means structured oversight.

Define:

- Who owns the agent

- Who monitors performance metrics

- How often outputs are sampled and audited

- How model drift or logic drift is detected

- What retraining or recalibration process exists

Ownership matters. In most organizations, privacy sits within Legal or Compliance, often without clear authority across systems. If no one owns the agent, risk becomes collective and therefore invisible.
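Sampling is the easiest part of this to make concrete. Here is a minimal sketch of a reproducible random sample of agent outputs for human audit. The function name, the 10% rate, and the seed are illustrative assumptions, and the right sampling rate depends on your volume and risk tier.

```python
import random


def sample_for_review(output_ids: list[str], rate: float, seed: int) -> list[str]:
    """Pick a reproducible random subset of agent outputs for human audit."""
    if not 0 < rate <= 1:
        raise ValueError("rate must be in (0, 1]")
    if not output_ids:
        return []
    # Always review at least one output, even for very small batches.
    k = max(1, round(len(output_ids) * rate))
    # A fixed seed lets an auditor re-derive exactly which items were sampled.
    return random.Random(seed).sample(output_ids, k)


outputs = [f"output-{i}" for i in range(20)]
picked = sample_for_review(outputs, rate=0.10, seed=42)
print(len(picked))  # 2
```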

Step seven: Align to business outcomes, not experiments

Agents should reduce measurable strain.

Track:

- Reduction in manual hours per DSR

- Assessment completion time

- SLA adherence

- Error rate reduction

- Escalation frequency

The goal is not novelty. The goal is moving from reactive and manual to proactive and automated. If you cannot tie the agent to efficiency, risk reduction, or defensibility, do not deploy it.
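Two of these metrics are simple enough to compute directly. The helper names and the sample numbers below are made up for illustration. Plug in your own baseline and SLA window.

```python
def pct_reduction(before: float, after: float) -> float:
    """Percentage reduction from a baseline (positive means improvement)."""
    if before <= 0:
        raise ValueError("baseline must be positive")
    return (before - after) / before * 100


def sla_adherence(days_to_close: list[int], sla_days: int) -> float:
    """Share of requests closed within the SLA, as a percentage."""
    if not days_to_close:
        raise ValueError("no requests to measure")
    on_time = sum(1 for d in days_to_close if d <= sla_days)
    return on_time / len(days_to_close) * 100


# Hypothetical figures: manual hours per DSR dropped from 4.0 to 1.0.
print(pct_reduction(4.0, 1.0))              # 75.0
# Hypothetical figures: four requests measured against a 30-day SLA.
print(sla_adherence([10, 25, 31, 40], 30))  # 50.0
```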

What does this change?

When privacy teams define these guardrails:

- AI removes low-judgment work.

- Human leaders focus on high-judgment decisions.

- Risk becomes structured instead of abstract.

- Innovation becomes defensible.

Without clarity, AI increases risk. With clarity, it strengthens your privacy program.

Privacy leaders talk about AI risk all day. The ones who will influence AI across the enterprise are the ones who operationalize it inside their own function first.

Before you deploy your next AI agent, check your company’s AI security policies and work through the seven steps above. If you cannot fit your answers on one page, you do not have an operating model yet.

And that, not the AI, is the real risk.

Looking for more resources on applying AI to privacy? Explore the AI Prompt Library. Searching for more guidance on governance? Start by creating an AI governance roadmap, or explore DataGrail for AI Governance.